Galaxy A-Series Gemma3 Pipeline Benchmark

- TecAce Software

- Apr 14

Why This Test Matters

Moving from the A16's SoC to the A56's cut average inference latency by 29%. We ran Gemma3 270M INT8 on four Galaxy A-series devices to find out where on-device LLM inference becomes practically usable.

We tested gemma-3-270m-it-int8 via the MediaPipe CPU backend on the Galaxy A16, A26, A36, and A56, measuring latency, token throughput, memory, and accuracy across 25 prompts. We also compared parallel execution (all 4 devices simultaneously) with serial execution (each device independently, 2 runs) to verify whether concurrent operation affects results.
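The article does not spell out how each metric was derived, so the following is only a minimal sketch of one common way to compute TTFT, prefill TPS, decode TPS, and total latency from per-token timestamps. The `RunTiming` record, its field names, and the exact formulas are assumptions for illustration, not the benchmark harness used here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RunTiming:
    """Timestamps for one prompt, in milliseconds (hypothetical record layout)."""
    request_ms: float        # when the prompt was submitted
    token_ms: List[float]    # arrival time of each generated token
    prompt_tokens: int       # number of tokens in the prompt


def ttft_ms(run: RunTiming) -> float:
    """Time to first token: from submission to the first generated token."""
    return run.token_ms[0] - run.request_ms


def prefill_tps(run: RunTiming) -> float:
    """Prompt tokens processed per second before the first output token (assumed definition)."""
    return run.prompt_tokens / (ttft_ms(run) / 1000.0)


def decode_tps(run: RunTiming) -> float:
    """Generated tokens per second after the first token has arrived (assumed definition)."""
    if len(run.token_ms) < 2:
        raise ValueError("decode TPS needs at least two generated tokens")
    decode_window_s = (run.token_ms[-1] - run.token_ms[0]) / 1000.0
    return (len(run.token_ms) - 1) / decode_window_s


def total_latency_ms(run: RunTiming) -> float:
    """End-to-end latency: from submission to the last generated token."""
    return run.token_ms[-1] - run.request_ms


# Synthetic example, not benchmark data: 20-token prompt, 5 output tokens.
example = RunTiming(
    request_ms=0.0,
    token_ms=[500.0, 550.0, 600.0, 650.0, 700.0],
    prompt_tokens=20,
)
```

Separating the prefill window from the decode window is what lets a device have a good TTFT but mediocre conversational speed, or the reverse.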

The verdict: execution mode made no difference. SoC generation made a large one.

Performance Rankings — SoC Generation Drives Speed

The A56 averaged 11,593ms per prompt, 29% less than the A16's 16,430ms. Every device ran the same model on the same backend, so we attribute this gap to the SoC rather than to software configuration.

A56 Average Latency 11.6s · Decode TPS 23.12 — Best across all four devices

The table below shows key inference metrics from the parallel test (25 prompts per device). Decode TPS reflects conversational speed; TTFT reflects responsiveness to the first token.

| Device | Avg latency (ms) | Median latency (ms) | Decode TPS | Prefill TPS | TTFT (ms) | Init (ms) |
| --- | --- | --- | --- | --- | --- | --- |
| A16 | 16,430 | 6,864 | 15.39 | 24.25 | 812 | 1,448 |
| A26 | 13,560 | 5,610 | 18.60 | 36.96 | 539 | 1,279 |
| A36 | 13,974 | 5,946 | 17.46 | 37.82 | 512 | 1,219 |
| A56 | 11,593 | 3,795 | 23.12 | 52.15 | 371 | 966 |

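The A16 (red in Fig 1) averages 16.4s, slow enough to risk user drop-off in real-time chat scenarios. The A26 and A36 form a practical mid-range at 13.6–14.0s. The A56's 3.8s median enables genuinely interactive responses. On every device the average sits well above the median, so a minority of long generations dominates the mean.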

[Fig 1] Average Latency by Device (seconds)
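The 29% figure is a reduction in average latency; expressed as throughput, the same gap looks larger. The short calculation below reproduces both numbers from the A16 and A56 rows of the metrics table. The helper name `pct_change` is ours, introduced only for illustration.

```python
def pct_change(new: float, base: float) -> float:
    """Relative change of `new` versus `base`, in percent."""
    return (new - base) / base * 100.0


# Values copied from the parallel-test table (A16 is the baseline).
a16_avg_ms, a56_avg_ms = 16430, 11593
a16_decode_tps, a56_decode_tps = 15.39, 23.12

latency_change = pct_change(a56_avg_ms, a16_avg_ms)       # about -29.4 %
tps_change = pct_change(a56_decode_tps, a16_decode_tps)   # about +50.2 %
speedup = a16_avg_ms / a56_avg_ms                         # about 1.42x

print(f"A56 vs A16 average latency: {latency_change:+.1f}%")
print(f"A56 vs A16 decode TPS:      {tps_change:+.1f}%")
print(f"Latency speedup factor:     {speedup:.2f}x")
```

Stating which quantity a percentage refers to (latency or throughput) avoids ambiguity when the two are quoted side by side.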

Memory — Qualcomm is 14% More Efficient

All devices use 415–482MB of native memory. The A36 (Qualcomm SM6475) achieves the lowest footprint — smaller than the fastest device, A56.

A36 (Qualcomm SM6475) avg 414.7MB — 14% lower than A16 (482.4MB)

In this benchmark the Qualcomm device held a smaller native footprint than Samsung Exynos, which may reflect more efficient memory allocation during LLM layer loading. We expect this gap to widen when switching to an NPU backend.

Validation — Model Accuracy is Hardware-Independent

We evaluated 14 prompts with ground-truth answers. All four devices returned an identical 50.0% pass rate — the failures are model-level limitations of 270M parameters, not device issues.

50.0% pass rate (7/14) across all devices — Structured output & code: 100%, Math & reasoning: weak

Structured output (JSON) and code generation scored 100%. The factual_02 failure (H₂O vs H2O) is a validator normalization issue, not a model error. With NFKC normalization applied, the overall rate rises to 57% (8/14). Math and reasoning failures reflect the 270M architecture ceiling.
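The normalization fix can be sketched as follows. Unicode NFKC maps the subscript two in "H₂O" to a plain "2", so both spellings compare equal after normalization. The matching rule (case-insensitive substring match) and the function names are assumptions for illustration; the validator actually used in the benchmark is not shown in this article.

```python
import unicodedata


def normalize_answer(text: str) -> str:
    """Fold compatibility characters (e.g. subscripts) and case before comparing."""
    return unicodedata.normalize("NFKC", text).strip().casefold()


def passes(model_output: str, expected: str) -> bool:
    """Hypothetical check: the expected answer appears in the model output."""
    return normalize_answer(expected) in normalize_answer(model_output)


output = "Water is H₂O."
expected = "H2O"

raw_match = expected in output              # False: U+2082 is not the digit "2"
normalized_match = passes(output, expected)  # True after NFKC normalization

# Effect on the overall pass rate reported above.
rate_before = 7 / 14 * 100   # 50.0
rate_after = 8 / 14 * 100    # about 57.1
```

Normalizing both the expected answer and the model output means the check works regardless of which side contains the subscript form.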

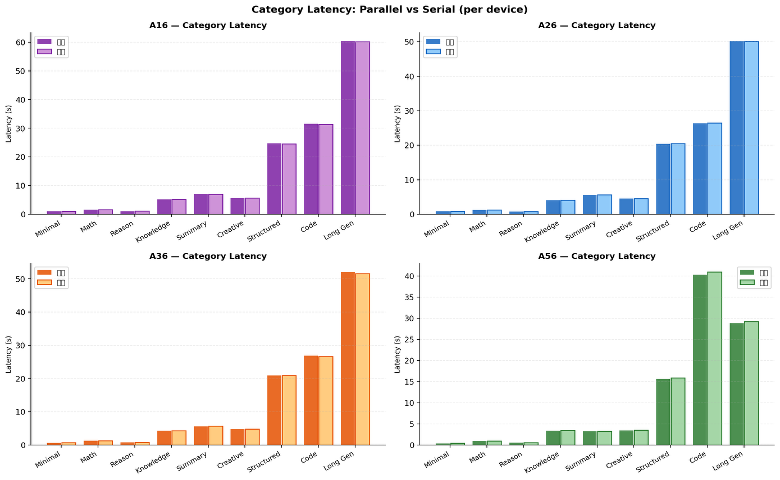

[Fig 2] Category Latency — Parallel vs Serial (all devices)

Parallel vs Serial — Concurrent Execution Has No Effect

Running all 4 devices simultaneously did not affect per-device inference performance. On every device, average latency in parallel and serial mode differed by about 1.5% or less.

The serial test ran each device independently for two identical rounds (50 results per device). Run-to-run differences were within 2% for the A16, A26, and A36. The A56 showed a 3.1% difference between runs, likely due to an ~8,355-second (about 2.3-hour) session gap rather than a platform issue.

| Device | Parallel (ms) | Serial (ms) | Delta (ms) | Delta % | Verdict | Run1 vs Run2 |
| --- | --- | --- | --- | --- | --- | --- |
| A16 | 16,430 | 16,365 | -65 | -0.4% | OK | -129ms / -0.8% |
| A26 | 13,560 | 13,584 | +24 | +0.2% | OK | +48ms / +0.4% |
| A36 | 13,974 | 13,895 | -79 | -0.6% | OK | -157ms / -1.1% |
| A56 | 11,593 | 11,770 | +177 | +1.5% | OK | +354ms / +3.1% |

On each device the MediaPipe CPU backend operates in an isolated process space, so runs on the other devices do not interfere with it. Parallel benchmarking is therefore reliable for device comparison; a serial dual run is recommended only for reproducibility certification.

[Fig 3] Parallel vs Serial Average Latency
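The Delta and Delta % columns in the parallel vs serial table can be reproduced with a few lines. The article does not state the threshold behind the "OK" verdict, so the 2% tolerance below is a hypothetical value chosen for illustration, as is the "CHECK" label for rows that exceed it.

```python
# Average latency in ms, copied from the parallel vs serial table.
PARALLEL_MS = {"A16": 16430, "A26": 13560, "A36": 13974, "A56": 11593}
SERIAL_MS = {"A16": 16365, "A26": 13584, "A36": 13895, "A56": 11770}

TOLERANCE_PCT = 2.0  # assumption: not taken from the article


def compare_modes(parallel_ms, serial_ms, tolerance_pct):
    """Return {device: (delta_ms, delta_pct, verdict)} with serial measured against parallel."""
    rows = {}
    for device, par in parallel_ms.items():
        delta = serial_ms[device] - par
        pct = delta / par * 100.0
        verdict = "OK" if abs(pct) <= tolerance_pct else "CHECK"
        rows[device] = (delta, round(pct, 1), verdict)
    return rows


results = compare_modes(PARALLEL_MS, SERIAL_MS, TOLERANCE_PCT)
for device, (delta, pct, verdict) in results.items():
    print(f"{device}: {delta:+d} ms ({pct:+.1f}%) {verdict}")
```

Fixing the tolerance before running the comparison keeps the verdict from being tuned to the data after the fact.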

Three Deployment Rules

Rule 1: Speed → A56. Memory Efficiency → A36.

The A56 (Exynos s5e8855) is the only device delivering interactive-grade inference at 23.12 Decode TPS. If memory is the constraint, the A36 (Qualcomm SM6475) uses 14% less memory than the A16, but at 17.46 Decode TPS it is clearly slower than the A56.

Rule 2: Parallel Testing is Reliable.

Concurrent device execution introduces no measurable interference. Future benchmarks can safely use parallel mode. Serial dual-run is only needed for reproducibility certification.

Rule 3: Math and Reasoning Need a Larger Model.

The 50% pass rate is a 270M parameter ceiling, not a device or runtime issue. For accuracy-critical scenarios, evaluate Gemma3 1B or larger. Structured output and code generation are production-ready at this model size.

| Scenario | Recommended | Rationale |
| --- | --- | --- |
| Real-time chat / interactive UX | Galaxy A56 | Decode TPS 23.12, median 3.8s; interactive-grade |
| JSON / structured output | All devices | 100% accuracy; hardware-independent |
| Code generation | A36–A56 preferred | A16 code tasks avg 31,515ms; impractical |
| Memory-constrained environments | Galaxy A36 | Qualcomm SM6475 lowest memory at 414.7MB |
| Complex math / reasoning | Upgrade model | Gemma3 1B+ recommended; 270M structural limit |
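For teams that want to apply these recommendations against their own latency budget, the table can be turned into a simple filter over the measured metrics. This is a sketch only: the metric values come from the parallel-test results above, while the function name `shortlist` and the example thresholds are hypothetical and should be replaced with product-specific requirements.

```python
# Measured values from the parallel test (25 prompts per device).
DEVICES = {
    "A16": {"decode_tps": 15.39, "ttft_ms": 812, "avg_ms": 16430},
    "A26": {"decode_tps": 18.60, "ttft_ms": 539, "avg_ms": 13560},
    "A36": {"decode_tps": 17.46, "ttft_ms": 512, "avg_ms": 13974},
    "A56": {"decode_tps": 23.12, "ttft_ms": 371, "avg_ms": 11593},
}


def shortlist(min_decode_tps: float = 0.0, max_ttft_ms: float = float("inf")):
    """Devices meeting both limits, fastest decode first."""
    fits = [
        name
        for name, m in DEVICES.items()
        if m["decode_tps"] >= min_decode_tps and m["ttft_ms"] <= max_ttft_ms
    ]
    return sorted(fits, key=lambda name: DEVICES[name]["decode_tps"], reverse=True)


# Example thresholds (assumptions, not recommendations from the benchmark).
interactive = shortlist(min_decode_tps=20.0)   # only the A56 clears 20 TPS
responsive = shortlist(max_ttft_ms=600)        # excludes the A16 at 812 ms
```

Memory is left out of the filter because per-device averages are only reported here for the A16 and A36.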
