On-device LLM

Edge AI, On-device AI, LLM, Mobile AI

Private, fast, and cloud-free AI that works wherever you are.

Our on-device LLM solution brings the power of generative AI to mobile, wearable, and offline environments. We design and deploy ultra-lightweight small LLMs (sLLMs) tailored to low-connectivity use cases such as retail stores, embedded kiosks, and mobile or wearable devices. Because inference runs locally, this enables offline LLM QA and privacy-preserving validation, making it well suited to sensitive environments and real-time evaluation workflows at the edge.

Key Capabilities

On-device AI inference without cloud dependency

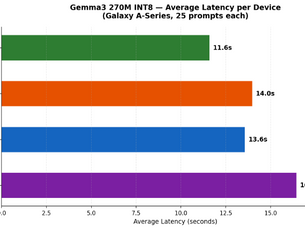

Optimized performance for mobile and edge devices

Low-latency real-time response generation

Secure data processing on local hardware

Efficient resource and power management
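This page does not say which compression techniques the solution uses, although one of the related articles discusses quantization. The sketch below assumes symmetric per-tensor int8 weight quantization, a common technique that is not necessarily the one used here. It shows why storing weights at lower precision cuts their memory footprint by roughly 4x compared with float32. The function names are hypothetical and only serve as illustration.

```python
from array import array


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Hypothetical helper: symmetric per-tensor int8 quantization.

    scale = max|w| / 127, q = round(w / scale), clamped to [-127, 127].
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Hypothetical helper: map int8 codes back to approximate float weights."""
    return [v * scale for v in q]


def footprint_bytes(weights: list[float], q: list[int]) -> tuple[int, int]:
    """Return (float32 bytes, int8 bytes) for the same tensor."""
    fp32 = len(array("f", weights).tobytes())  # 4 bytes per C float
    int8 = len(array("b", q).tobytes())        # 1 byte per signed char
    return fp32, int8


if __name__ == "__main__":
    w = [0.12, -0.5, 0.33, 1.0, -0.98, 0.0]
    codes, s = quantize_int8(w)
    restored = dequantize_int8(codes, s)
    print("int8 codes:", codes)
    print("max abs error:", max(abs(a - b) for a, b in zip(w, restored)))
    print("bytes (fp32, int8):", footprint_bytes(w, codes))
```

Each weight's rounding error is bounded by half the scale. A real deployment would typically quantize per channel or per group, and may calibrate activations as well. This sketch leaves those choices out.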

Related Insights

Explore supporting case studies, benchmarks, and technical research behind our AI solutions.

![[On-Device AI Chatbot] Part 3: Core Technologies of Mobile AI: Quantization and NPU Optimization](https://static.wixstatic.com/media/2ea07e_08ed983f9efb45fe9129e06967a91163~mv2.png/v1/fill/w_306,h_229,fp_0.50_0.50,q_95,enc_avif,quality_auto/2ea07e_08ed983f9efb45fe9129e06967a91163~mv2.webp)